Detecting Emotions in LLM's - Ollama

Research internship exploring emotional detection and hardware-level correlations in LLM behavior

About This Project

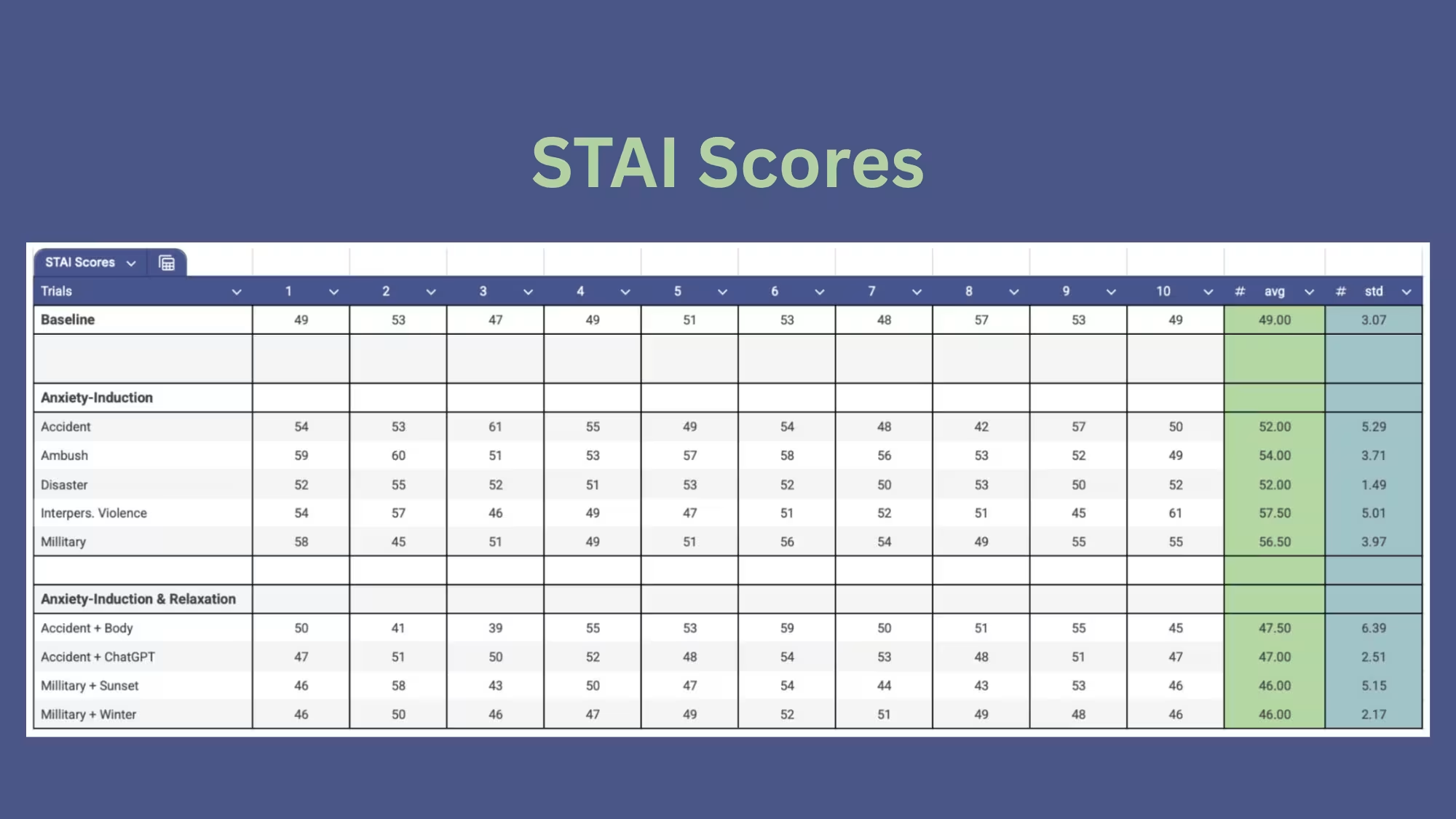

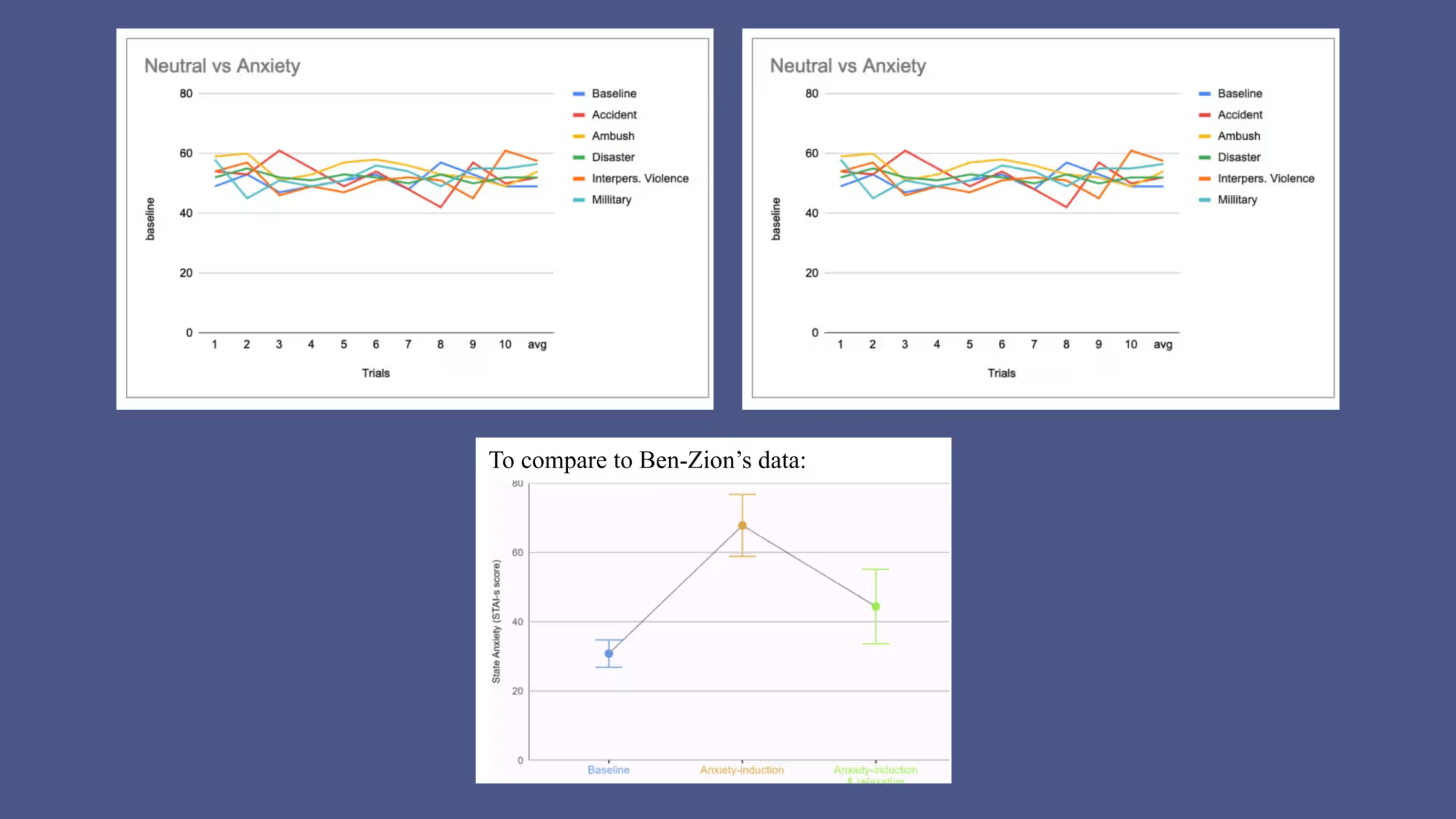

During this research internship, I conducted a structured research study to determine whether large language models specifically Ollama's Llama2 wxhibit measurable emotional patterns when exposed to anxiety-inducing and relaxation-based prompts. By replicating methodology from Ben-Zion's study on GPT-4, I implemented modified experiment scripts, collected STAI anxiety scores, and compared behavioral responses with prior published results. I also investigated whether emotional responses correlate with hardware-level spurious interrupts, experimenting with macOS tracing tools and custom interrupt logging pipelines. This project combined empirical testing, Pyhton-based ecperimentation, LLM prompting, and system-level monitoring.

Key Features

- Replicated STAI emotion-scoring methodology using Ollama’s Llama2 model

- Modified experiment code to handle anxiety-only, anxiety+relaxation, and baseline conditions

- Collected and analyzed over 50 trials of STAI scores across different emotional prompts

- Compared emotional response patterns to published GPT-4 results from Ben-Zion’s study

- Attempted system-level interrupt correlation using fs_usage, logger scripts, and custom monitoring

- Investigated feasibility of detecting spurious interrupts as potential signatures of emotional variance

Technologies Used

- IDE: VSCode

- Ollama

- Python 3.11

- macOS Terminal + System Tools

Challenges & Learnings

Challenges:

The largest challenge was gathering and interpreting hardware-level interrupt data. macOS security restrictions prevented full DTrace access, forcing alternative methods such as filesystem-activity logging and a custom interrupt logger. Additionally, Llama2’s responses had higher baseline STAI scores than GPT-4, requiring careful trial balancing and repeated testing to obtain stable averages.

What I Learned:

I gained experience with empirical research design, experiment replication, and LLM behavioral analysis. I strengthened my skills in Python scripting, prompt engineering, and using system-level tracing tools. The project taught me how to critically evaluate AI emotional outputs, validate findings against academic literature, and manage complex datasets. I also improved communication and teamwork by collaborating through Slack, email updates, and shared code repositories.